Analyst report: What CTOs must know about Kubernetes and containers

Containers and Kubernetes are an essential part of cloud native architecture. Read about Gartner’s recommendations for adoption and ROI.

On: Mar 14, 2023

Jess is a Technical Content Writer on the content marketing team at Chronosphere. She has over a decade of experience writing, editing, and managing content for B2B technology brands. Prior to Chronosphere, she worked at TechTarget covering data center, virtualization, and IoT technology. She currently resides in Seattle and is a trivia enthusiast.

Cloud native applications and microservices are essential for any modern business to run effectively. But tech executives can’t just buy off-the-shelf solutions or lift-and-shift legacy infrastructure into cloud native architectures without planning.

Engineers need the right tools, teams, and skills, yet it can be tough to know which tools to purchase and implement, and how to calculate the return on investment (ROI).

The Gartner report, “Gartner® Report: a CTO’s Guide to Navigating the Cloud-native Container Ecosystem,” states that containers and Kubernetes have emerged as prominent platform technologies for building cloud native apps and modernizing legacy workloads. According to the report, by 2027 more than 90% of global organizations will be running containerized applications in production, a significant increase from fewer than 40% in 2021.

The authors also write that “enterprises face challenges in accurately measuring the ROI of their cloud native investments and in creating the right organizational structure for it to flourish.”

Here’s what enterprises should know about containers and Kubernetes, their main use cases, and how they help run cloud native architecture.

What are containers, Kubernetes and their use cases?

Containers are lightweight packages that bundle application code together with the libraries and dependencies it needs to run. Kubernetes is an open source platform that helps deploy, scale, and manage containers.

These technologies are commonly used for microservices and application portability, and to reduce the risk of lock-in. They also enable DevOps workflows and legacy application modernization. Any company that decides to go cloud native or upgrade its infrastructure must use both containers and Kubernetes.

According to Gartner, ideal applications for containers and Kubernetes have:

- A low degree of external application dependencies.

- Container images for the supporting application’s infrastructure and platform technologies.

- Rapid elasticity needs and frequent code changes.

- A vendor-supported image for any commercial off-the-shelf (COTS) application deployment.
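The four criteria above can be treated as a simple screening checklist for candidate applications. The sketch below is only an illustration: the `AppProfile` class, its field names, and the `unmet_criteria` helper are hypothetical and do not come from the Gartner report, and applying the vendor-image criterion only to COTS applications is an assumption.

```python
from dataclasses import dataclass


@dataclass
class AppProfile:
    """Hypothetical description of a candidate application."""
    name: str
    few_external_dependencies: bool
    supporting_images_available: bool
    needs_elasticity_and_frequent_changes: bool
    is_cots: bool = False
    vendor_supported_image: bool = False


def unmet_criteria(app: AppProfile) -> list[str]:
    """Return the criteria from the list above that the app does not meet."""
    unmet = []
    if not app.few_external_dependencies:
        unmet.append("low degree of external application dependencies")
    if not app.supporting_images_available:
        unmet.append("container images for supporting infrastructure and platform technologies")
    if not app.needs_elasticity_and_frequent_changes:
        unmet.append("rapid elasticity needs and frequent code changes")
    # Assumption: the vendor-image criterion only applies to COTS applications.
    if app.is_cots and not app.vendor_supported_image:
        unmet.append("vendor-supported image for the COTS application")
    return unmet


# Two made-up applications for illustration.
checkout = AppProfile("checkout-service", True, True, True)
legacy_erp = AppProfile("legacy-erp", False, True, False, is_cots=True)

print(unmet_criteria(checkout))    # an empty list: all criteria met
print(unmet_criteria(legacy_erp))  # lists the three criteria this app misses
```

An application with an empty result is a reasonable first candidate; one with several unmet criteria may add more complexity than it removes.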

How well does the industry support these options?

Most container images are based on open source software. A container image is the static file that holds all the executable code to create a container within a computing system.

The report highlights that, compared with open source software, where container support is already commonplace, container support for COTS applications has grown much more slowly and varies greatly by vendor.

“While some COTS ISVs [independent software vendors] strategically provide strong support for Kubernetes, such as IBM, many COTS ISVs haven’t supported yet — especially in Windows-based or enterprise business applications. You should review container support strategy and roadmaps of their strategic COTS ISVs,” the authors write.

Still, Gartner notes an increasing number of vendors are developing container support and “more ISVs are enabling deeper integrations with containers/Kubernetes than just providing container images.”

The report highlights that AWS Marketplace for Containers has 524 container-related entries as of February 2022, 64% up from 320 in February 2020.
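As a quick check of that growth figure, the percentage can be recomputed from the two entry counts cited in the report. The variable names below are chosen only for this illustration.

```python
entries_2020 = 320  # AWS Marketplace for Containers entries, February 2020
entries_2022 = 524  # AWS Marketplace for Containers entries, February 2022

# Percentage growth = (new - old) / old * 100
growth_pct = (entries_2022 - entries_2020) / entries_2020 * 100
print(f"{growth_pct:.2f}%")  # 63.75%, which rounds to the 64% cited
```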

The industry trends emerging around Kubernetes and containers include VM convergence, stateful application support, edge computing, serverless convergence, and application workflow automation.

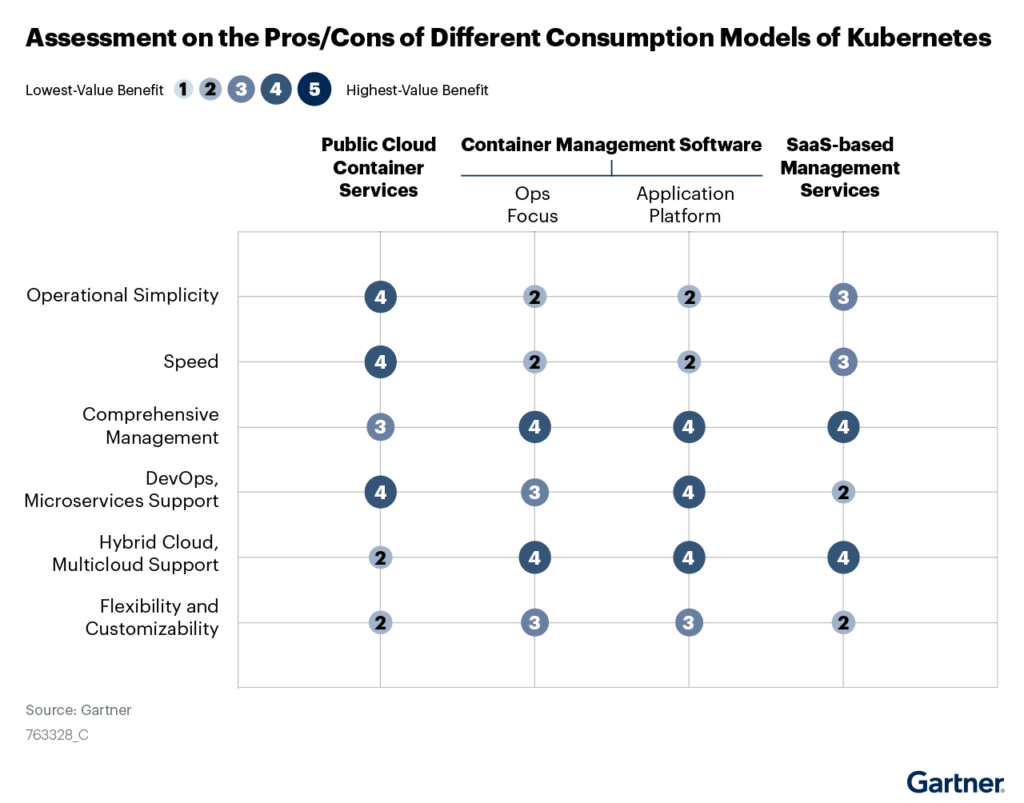

The combination of open source and COTS applications for Kubernetes and containers provides organizations with several deployment options.

Are there benefits to containers and Kubernetes?

Containers offer multiple benefits for organizations that specifically run cloud native architectures. They provide agile application development and deployment, environmental consistency, and immutability.

Because Kubernetes runs on top of the container software, it offers flexibility and choice.

“Kubernetes is supported by a huge ecosystem of cloud providers, ISVs and IHVs [independent hardware vendors]. This API and cross-platform consistency, open-source innovation and industry support offers a great degree of flexibility for CTOs,” the Gartner authors state.

What are Kubernetes’ technological limitations?

CTOs and IT managers must acknowledge platform complexity, especially because containers and Kubernetes aren’t optimal for every use case. These technologies work best in dynamic, scalable environments and add complexity when engineers use them to manage static COTS applications.

What skills are needed for a successful Kubernetes deployment?

A big part of a successful container and Kubernetes implementation is making sure the proper teams and skill sets are in place to manage and run the technology. Organizations should invest in a variety of core and secondary roles that cover security, platform operations, reliability engineering, and build and release engineering. These roles can help ensure a Kubernetes deployment is secure, reliable, and consistently developed for the organization at scale.

Roles and responsibilities for teams running Kubernetes:

The development team should be tasked with coding, application design, implementation and testing, as well as source code management.

A platform engineering team oversees platform selection, installation, configuration, and administration. The members should also be able to maintain base images, integrate the DevOps pipeline, enable self-service capabilities for developers, automate container provisioning, and provide capacity planning and workload isolation.

Reliability engineers work on security, monitoring and performance aspects. They should focus on application resiliency; be able to debug and document production issues; and be responsible for incident management and response.

Lastly, the build and release engineering team chooses the CI/CD deployment pipeline, develops templates for new services, educates development teams, and creates dashboards to measure efficiency and productivity.

How can organizations measure the ROI of containers?

“Ensuring ROI by building a thorough business case is important to validate that you aren’t investing in containers and Kubernetes purely because it is a shiny new technology. Organizations need to take a realistic view of the costs incurred and potential benefits,” the report authors write.

The benefits of containers that organizations can measure include developer productivity, an agile CI/CD environment, infrastructure efficiency gains, and reduced operational overhead.

Potential costs that could cut into benefits are container-as-a-service/platform-as-a-service (CaaS/PaaS) license fees; additional software licenses for security, automation, and monitoring tools; infrastructure investment costs; hiring new staff to run such deployments; as well as professional implementation services to get everything online and running smoothly.
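One way to start such a business case is to total the benefit and cost categories listed above and apply the common ROI formula, (benefits - costs) / costs. The sketch below assumes that formula rather than any method prescribed by the report, and every dollar figure is a made-up placeholder; the `simple_roi` helper and the dictionary keys are likewise hypothetical.

```python
# All figures are hypothetical annual amounts in US dollars, for illustration only.
benefits = {
    "developer_productivity": 400_000,
    "agile_ci_cd_environment": 150_000,
    "infrastructure_efficiency": 250_000,
    "reduced_operational_overhead": 100_000,
}
costs = {
    "caas_paas_license_fees": 200_000,
    "security_automation_monitoring_licenses": 120_000,
    "infrastructure_investment": 180_000,
    "new_staff": 200_000,
    "professional_implementation_services": 50_000,
}


def simple_roi(benefits: dict[str, float], costs: dict[str, float]) -> float:
    """Return (total benefits - total costs) / total costs."""
    total_benefits = sum(benefits.values())
    total_costs = sum(costs.values())
    if total_costs == 0:
        raise ValueError("total costs must be non-zero")
    return (total_benefits - total_costs) / total_costs


print(f"ROI: {simple_roi(benefits, costs):.1%}")  # ROI: 20.0%
```

Benefits such as developer productivity are hard to express in dollars, so in practice the estimates behind each entry matter more than the formula itself.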

What steps does Gartner recommend to technology leaders?

The Gartner authors recommend that technology leaders:

- Ensure that a strong business case exists, identify appropriate use cases and institute a DevOps culture before committing to and scaling Kubernetes platform environments.

- Create a platform team that curates platform selection, drives standardization and automation of DevOps functions, and collaborates with developers to foster cloud native architectures.

- Choose packaged software distributions or cloud-managed services for production deployments that integrate different technology components, simplify the life cycle management of that stack, and provide multicloud management, rather than adopting a DIY approach.

- Measure and communicate the benefits accurately, both through technical metrics, such as software velocity, release success, and operational efficiency gains, and through business metrics, such as top-line growth and customer satisfaction.

Containers and Kubernetes provide a clear technological foundation for organizations and IT leaders that want to run cloud native architectures and bring legacy applications into the 21st century. For a successful deployment and a healthy ROI, however, organizations should make sure the right applications and people are in place.

Interested in how containers and Kubernetes are essential for cloud native applications? Contact us for a demo today.
